by K. R. Thórisson

A new IIIM report argues that contemporary AI is a threat to democracy, freedom, and the national sovereignty of the Nordics.

The extensive new IIIM Tech Report released today, titled *Better Machine Intelligence: A Vision for Nordic AI Leadership*, outlines the limitations of contemporary AI and proposes a way forward for small-data nations like the Nordics and Baltics.

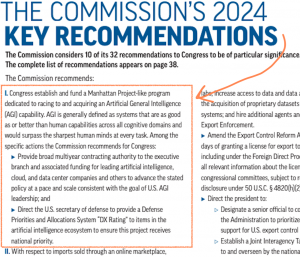

The report starts with a comprehensive explanation of the limitations of contemporary AI, followed by a short overview of general intelligence. Together, these sections provide evidence for the paper’s conclusion, laid out in its last section, that small-data nations like the Nordics and Baltics must seek an alternative to the contemporary AI provided by the U.S. tech giants: small-data AI.

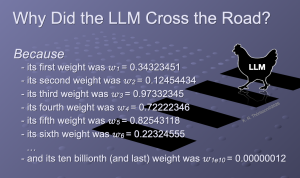

Contemporary AI technologies, based on artificial neural networks (ANNs), reinforcement learning (RL), and a variety of machine learning (ML) methods, are used to create many of the AI products and services released in recent years. When these are combined with standard software techniques, the result is generally referred to as “AI” or “genAI”. Because of its statistical foundation, ANN-based technology has significant limitations that are lost on the vast majority of its users. As a result, many believe the hype that this technology will take the jobs of a large swath of the population. While this might happen with future AI technologies, it cannot happen with current technology, because most tasks cannot be performed using statistical information alone.

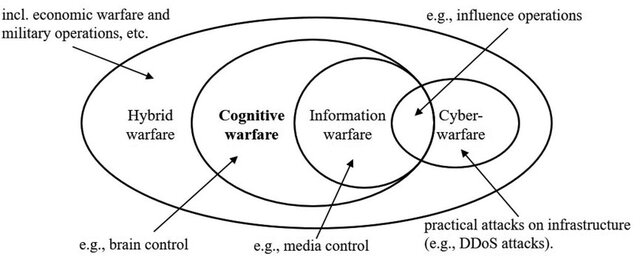

The paper argues that contemporary AI is not only inadequate for many of the applications it is being tested for, but is in fact a threat to society, because its inherent opaqueness makes it difficult to govern. The solution, outlined in the last part of the paper (*A New Vision for Nordic AI Research*), is to pivot away from contemporary AI as much as possible and to accelerate progress on transparent AI technologies that already exist in research labs in Europe and elsewhere.